Introduction

Every web page you visit has some degree of performance delay. This can range from the page taking a long time to load to specific parts of it not rendering correctly. So how do you minimise these delays, and how do you improve the performance of the page?

Firstly, whenever code is written, a code review should be conducted (if possible). This gives a second pair of eyes on the code and increases the likelihood of spotting any major issues. The same idea applies to the codebase as a whole: a general review of the codebase can help spot key performance issues.

One tool I found useful for reviewing high-risk areas was Google Chrome's DevTools. In the Performance panel, click the circular-arrow button ("Start profiling and reload page"), wait for the recording to finish, and then select the Bottom-Up tab.

Here you can see how much time is spent in specific parts of the code and which activities take the most time during page load. This is just one of the tools you can use to check for areas of code that might be causing issues.
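The Bottom-Up view groups recorded activities so that the ones that directly consumed the most time (their "self time") rise to the top. The TypeScript below is a minimal sketch of that idea, not how DevTools is implemented: it assumes a hypothetical `Activity` record holding a function name and its self time in milliseconds, sums the self time per function and sorts the totals in descending order.

```typescript
// Hypothetical shape of one recorded activity: the function that ran and
// the time spent in that function itself (excluding the functions it called).
interface Activity {
  name: string;
  selfTimeMs: number;
}

interface BottomUpRow {
  name: string;
  totalSelfTimeMs: number;
}

// Sum self time per function name, then sort so the most expensive
// functions come first, similar in spirit to a bottom-up view.
function bottomUp(activities: Activity[]): BottomUpRow[] {
  const totals = new Map<string, number>();
  for (const a of activities) {
    totals.set(a.name, (totals.get(a.name) ?? 0) + a.selfTimeMs);
  }
  return Array.from(totals, ([name, totalSelfTimeMs]) => ({ name, totalSelfTimeMs }))
    .sort((x, y) => y.totalSelfTimeMs - x.totalSelfTimeMs);
}

// Made-up sample data: parseJson is called twice.
const rows = bottomUp([
  { name: "parseJson", selfTimeMs: 12 },
  { name: "renderList", selfTimeMs: 40 },
  { name: "parseJson", selfTimeMs: 30 },
]);
console.log(rows[0]); // { name: 'parseJson', totalSelfTimeMs: 42 }
```

Note that `parseJson` ends up on top even though no single call to it was as slow as `renderList`; aggregating by function is what makes this view useful for spotting repeated small costs.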

Other tools I have found useful for measuring general performance are New Relic, JMeter and Matomo. Each measures performance in a slightly different way, but all are very useful for finding chokepoints or bottlenecks.
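Load-testing tools such as JMeter summarise many response-time samples into a few figures (its Aggregate Report, for example, shows an average and a "90% Line"), and a bottleneck often shows up first in those figures. The following is a minimal sketch of that kind of summary, assuming the response times are already collected as an array of milliseconds. It uses the nearest-rank percentile method, which may not match JMeter's own calculation exactly.

```typescript
interface Summary {
  count: number;
  averageMs: number;
  p90Ms: number;
  maxMs: number;
}

// Nearest-rank percentile on an ascending-sorted array: the smallest
// sample such that at least p% of samples are less than or equal to it.
function percentile(sorted: number[], p: number): number {
  const rank = Math.ceil((p * sorted.length) / 100);
  return sorted[Math.max(rank, 1) - 1];
}

function summarise(samplesMs: number[]): Summary {
  if (samplesMs.length === 0) {
    throw new Error("no samples to summarise");
  }
  // Copy before sorting so the caller's array is left untouched.
  const sorted = [...samplesMs].sort((a, b) => a - b);
  const total = sorted.reduce((sum, x) => sum + x, 0);
  return {
    count: sorted.length,
    averageMs: total / sorted.length,
    p90Ms: percentile(sorted, 90),
    maxMs: sorted[sorted.length - 1],
  };
}

// Made-up response times (ms) with one slow outlier.
const summary = summarise([120, 95, 110, 900, 105, 98, 130, 101, 99, 115]);
console.log(summary.averageMs, summary.p90Ms, summary.maxMs);
```

With this sample the single 900 ms outlier pulls the average well above the 90th percentile, which is why it helps to look at more than one figure when hunting for bottlenecks.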

Server-related issues can also affect performance, for example whether the server has enough resources available to handle incoming requests. Even network latency can greatly affect the overall load time.

How do we measure the performance of our sites?

As mentioned earlier, Matomo can be used to measure the performance of a site. Matomo has a built-in page performance section, which records the time taken between a web page making a request for the HTML and receiving it. The slight problem with this is that Matomo needs a user to navigate the site in order to record this data.
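In the browser, timings like these are exposed by the W3C Navigation Timing API, which records a timestamp for each stage of a page load. The sketch below assumes a plain object holding a subset of the `PerformanceNavigationTiming` fields (milliseconds relative to the start of navigation) and derives a few load phases from them, including the request-to-response time described above. The phase names are illustrative and are not necessarily how Matomo labels or calculates its own metrics.

```typescript
// Subset of the fields available on a PerformanceNavigationTiming entry.
// Values are milliseconds relative to the start of the navigation.
interface NavigationTimings {
  fetchStart: number;
  requestStart: number;
  responseStart: number;
  responseEnd: number;
  domInteractive: number;
  domComplete: number;
}

interface LoadPhases {
  beforeRequestMs: number;     // cache lookup, DNS and connection setup
  waitingForServerMs: number;  // request sent until first byte of the HTML
  downloadMs: number;          // first byte until last byte of the HTML
  requestToResponseMs: number; // request sent until the HTML is fully received
  domProcessingMs: number;     // HTML received until the DOM is interactive
  domCompletionMs: number;     // DOM interactive until the DOM is complete
}

function loadPhases(t: NavigationTimings): LoadPhases {
  return {
    beforeRequestMs: t.requestStart - t.fetchStart,
    waitingForServerMs: t.responseStart - t.requestStart,
    downloadMs: t.responseEnd - t.responseStart,
    requestToResponseMs: t.responseEnd - t.requestStart,
    domProcessingMs: t.domInteractive - t.responseEnd,
    domCompletionMs: t.domComplete - t.domInteractive,
  };
}

// Made-up example timings (ms).
const phases = loadPhases({
  fetchStart: 5,
  requestStart: 40,
  responseStart: 220,
  responseEnd: 260,
  domInteractive: 610,
  domComplete: 900,
});
console.log(phases.requestToResponseMs); // 220
```

In a real page, the input could be the entry returned by `performance.getEntriesByType("navigation")[0]`, which only exists once a real browser has loaded the page; this is the same reason a tool like Matomo needs an actual visit before it has anything to record.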

To solve this, we use WebdriverIO, a tool for automating a browser. With it, I create a specific series of steps for the browser to follow, for example navigating to a page and interacting with a certain element. This triggers the Matomo recording without anyone having to use the site manually.
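One way to keep such a series of steps readable is to store it as plain data and replay it with a small runner. The sketch below is hypothetical: `Step`, `Driver` and `runJourney` are names invented for illustration, and the `Driver` interface stands in for WebdriverIO so the logic can run without a browser. In a real WebdriverIO test, `open` would wrap `browser.url(url)` and `click` would wrap `$(selector).click()`.

```typescript
// One step in a scripted user journey (hypothetical format).
type Step =
  | { kind: "open"; url: string }
  | { kind: "click"; selector: string };

// Minimal browser abstraction, standing in for WebdriverIO.
interface Driver {
  open(url: string): Promise<void>;
  click(selector: string): Promise<void>;
}

// Run the steps strictly in order, waiting for each one to finish
// before starting the next.
async function runJourney(driver: Driver, steps: Step[]): Promise<void> {
  for (const step of steps) {
    switch (step.kind) {
      case "open":
        await driver.open(step.url);
        break;
      case "click":
        await driver.click(step.selector);
        break;
    }
  }
}

// Hypothetical journey: open a page, then interact with an element on it.
const journey: Step[] = [
  { kind: "open", url: "https://example.com/products" },
  { kind: "click", selector: "#add-to-basket" },
];

// A fake driver that only records what it was asked to do,
// useful for checking a journey without launching a browser.
const log: string[] = [];
const fakeDriver: Driver = {
  open: async (url) => { log.push(`open ${url}`); },
  click: async (selector) => { log.push(`click ${selector}`); },
};

runJourney(fakeDriver, journey).then(() => console.log(log));
```

Keeping the journey as data means the same steps can be replayed by the real browser driver in the pipeline and by the fake driver when editing them locally.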

Using a Jenkins job (Jenkins automates the non-human parts of the software development process, such as continuous integration), we can run these performance tests against our own sites with little human interaction. First, we provide a release tag (identifying the code changes we want to release) and run the job in Jenkins. This starts a build of a separate internal site. Once the build completes, the performance tests run against that site and the results are recorded. This all runs in the background, and the final results are stored in GitHub for further reporting.
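The job described above is essentially a fixed sequence of stages driven by a release tag, where a failed stage should stop the run before anything is recorded. The TypeScript below is a hypothetical sketch of that control flow only, not a Jenkins configuration: the `Stage` type and the stage names are invented for illustration, and in practice each stage would call out to the build, the WebdriverIO tests and GitHub.

```typescript
// A pipeline stage receives the release tag and either succeeds or throws.
interface Stage {
  name: string;
  run(releaseTag: string): Promise<void>;
}

interface PipelineResult {
  releaseTag: string;
  completed: string[];
  failedAt?: string;
}

// Run stages in order and stop at the first failure, so results from a
// broken build are never recorded.
async function runPipeline(releaseTag: string, stages: Stage[]): Promise<PipelineResult> {
  const completed: string[] = [];
  for (const stage of stages) {
    try {
      await stage.run(releaseTag);
      completed.push(stage.name);
    } catch {
      return { releaseTag, completed, failedAt: stage.name };
    }
  }
  return { releaseTag, completed };
}

// Hypothetical placeholder stages; real ones would do the actual work.
const stages: Stage[] = [
  { name: "build-internal-site", run: async () => {} },
  { name: "run-performance-tests", run: async () => {} },
  { name: "store-results-in-github", run: async () => {} },
];

runPipeline("v1.2.0", stages).then((result) => console.log(result));
```

Returning which stage failed, rather than just throwing, makes it easy to report a broken build separately from a genuine drop in performance.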

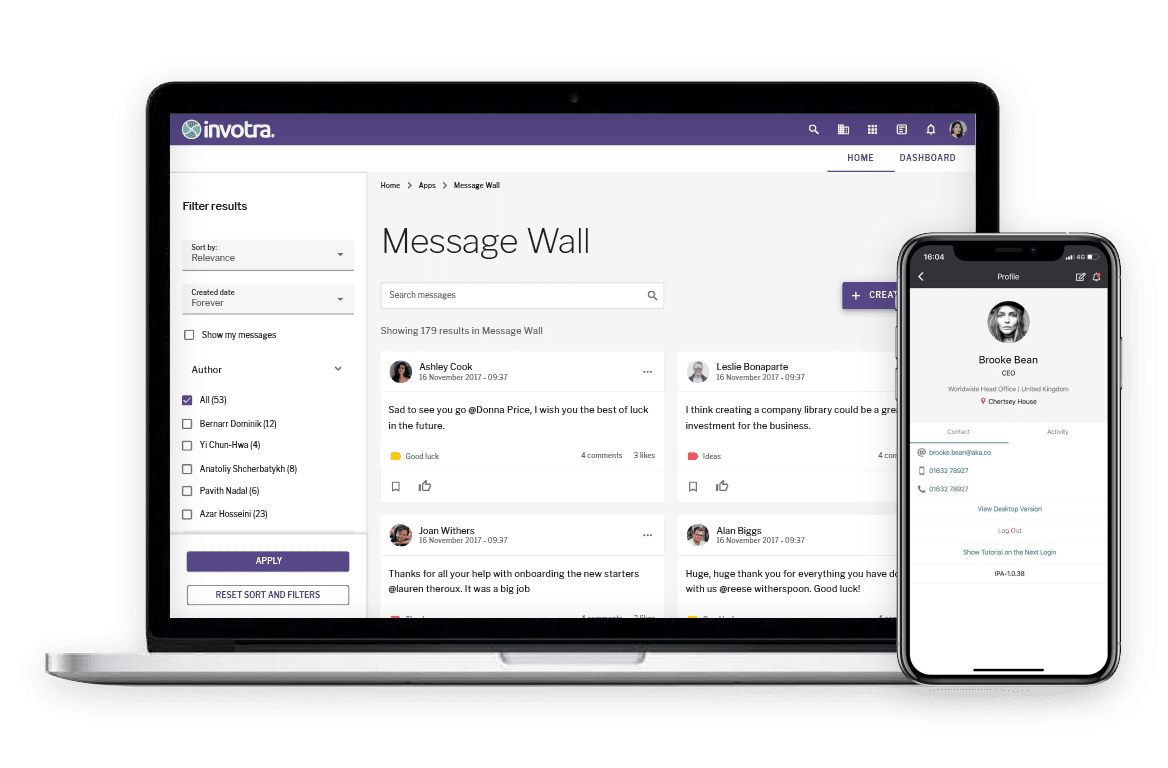

Below is an example of how we would report the data from the performance results.

The advantage of a report that shows both current and previous data is that it lets us track how each version affects the system. As a result, we can catch a drop in performance much earlier in the cycle, giving us time to work out what caused it and fix it before it reaches users.
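The comparison between the current and previous release can also be expressed as a simple automated check. The sketch below assumes results are stored as an average load time in milliseconds per page path (a hypothetical format, since the actual report layout isn't shown here) and flags any page that got slower by more than a tolerance; the 10% default is an arbitrary example value.

```typescript
// Hypothetical result format: average load time in ms, keyed by page path.
type Results = Record<string, number>;

interface Regression {
  page: string;
  previousMs: number;
  currentMs: number;
  changePercent: number;
}

// Flag pages whose load time rose by more than `tolerancePercent` compared
// with the previous release. Pages missing from the previous run are skipped.
function findRegressions(previous: Results, current: Results, tolerancePercent = 10): Regression[] {
  const regressions: Regression[] = [];
  for (const [page, currentMs] of Object.entries(current)) {
    const previousMs: number | undefined = previous[page];
    if (previousMs === undefined || previousMs <= 0) continue;
    const changePercent = ((currentMs - previousMs) / previousMs) * 100;
    if (changePercent > tolerancePercent) {
      regressions.push({ page, previousMs, currentMs, changePercent });
    }
  }
  return regressions;
}

// Made-up results for two releases.
const regressions = findRegressions(
  { "/": 800, "/search": 1200, "/basket": 500 },
  { "/": 820, "/search": 1500, "/basket": 480, "/new": 300 },
);
console.log(regressions); // only "/search" is flagged (25% slower)
```

A tolerance avoids flagging normal run-to-run noise (such as the 2.5% change on "/" here), while still catching a clear slowdown before release.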

This means end users should not notice any difference in site performance from one version to the next. This matters because a steady decline in performance could harm the user experience of the product.

In conclusion, there are multiple ways to check the overall performance of your web page, but the choice really comes down to how much reporting you need and how much time you want to spend measuring it. My best suggestion is to use a tool to find where the bottlenecks are, which (hopefully) lets you work on them and improve performance. From there, you can follow up with reporting, giving transparency between yourself and your customers.